Let’s import the packages we installed a few minutes ago, along with a few standard namespaces we will need later:

using CsvHelper;

using HtmlAgilityPack;

using System.IO;

using System.Collections.Generic;

using System.Globalization;

Outside the Main function, create a public class to hold the table of contents titles.

public class Row

{

public string Title {get; set;}

}

Now, coming back to the Main function, you need to load the page you wish to scrape. As I mentioned before, we will look at what Wikipedia writes about Greece!

HtmlWeb web = new HtmlWeb();

HtmlDocument doc = web.Load("https://en.wikipedia.org/wiki/Greece");

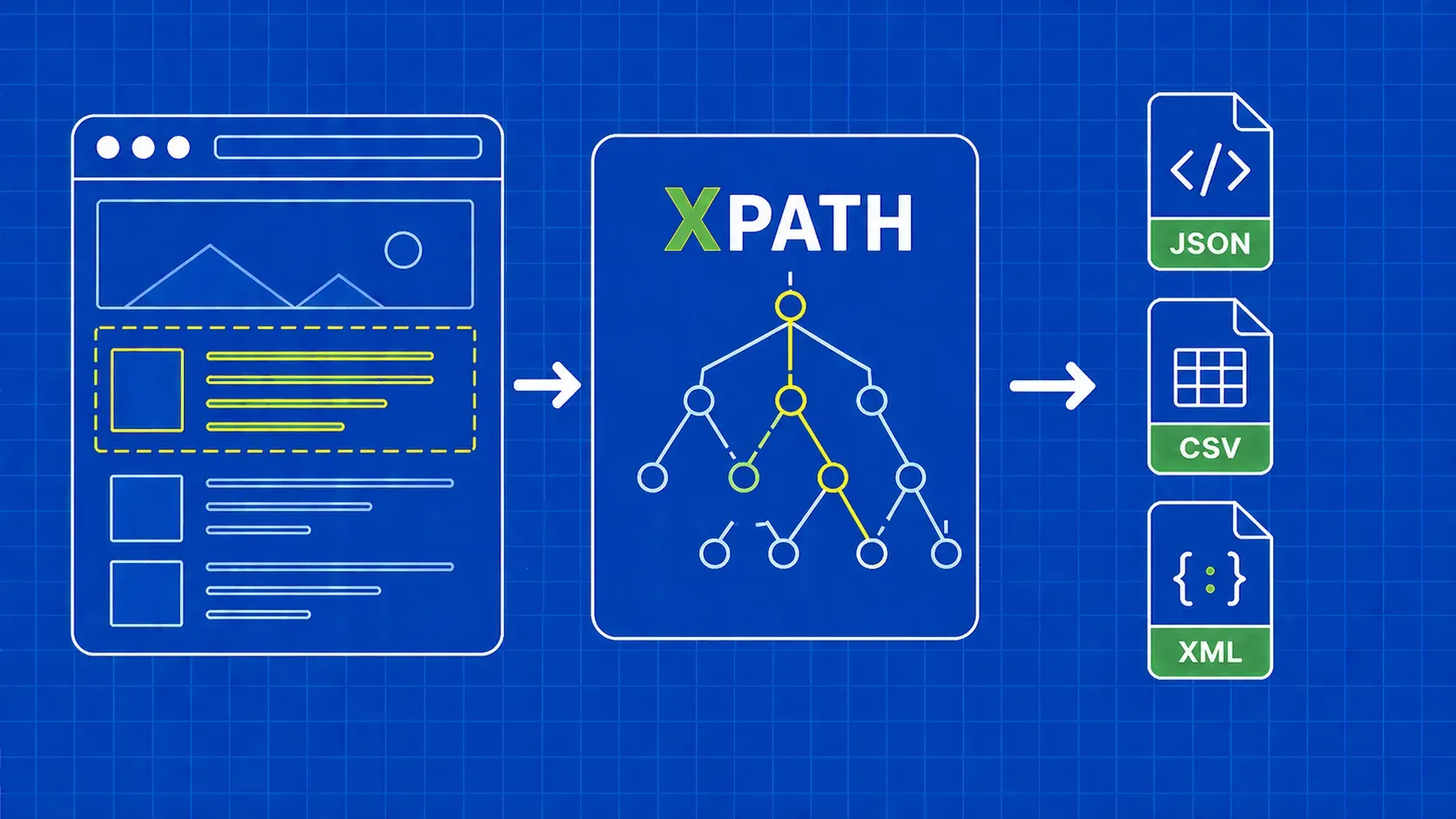

The next step is to select the nodes that hold the information you are looking for: the table of contents titles, which sit in the span tags with the class toctext.

var HeaderNames = doc.DocumentNode.SelectNodes("//span[@class='toctext']");
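One thing worth guarding against: in HtmlAgilityPack, SelectNodes returns null rather than an empty collection when the XPath matches nothing, which can happen if the page markup changes. Here is a minimal sketch of a defensive version. The TocExtractor class, the ExtractTitles helper, and the sample HTML string are all hypothetical names made up for illustration, and the sketch parses an inline string instead of downloading the page so you can try it offline.

```csharp
using System;
using System.Collections.Generic;
using HtmlAgilityPack;

public static class TocExtractor
{
    // Hypothetical helper: returns the text of every <span class="toctext">
    // in the given HTML, or an empty list when the selector matches nothing.
    public static List<string> ExtractTitles(string html)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);

        // SelectNodes returns null (not an empty collection) when there is no match.
        var nodes = doc.DocumentNode.SelectNodes("//span[@class='toctext']");
        var titles = new List<string>();
        if (nodes == null)
        {
            return titles;
        }

        foreach (var node in nodes)
        {
            // DeEntitize turns entities such as &amp; back into plain characters.
            titles.Add(HtmlEntity.DeEntitize(node.InnerText).Trim());
        }
        return titles;
    }

    public static void Main()
    {
        // Assumed sample markup standing in for the downloaded page.
        string sample =
            "<ul><li><span class='toctext'>History</span></li>" +
            "<li><span class='toctext'>Geography &amp; climate</span></li></ul>";

        foreach (var title in ExtractTitles(sample))
        {
            Console.WriteLine(title);
        }

        // A page without any matching span yields an empty list instead of a crash.
        Console.WriteLine(ExtractTitles("<p>no table of contents</p>").Count);
    }
}
```

With a check like this in place, a foreach over the result cannot throw a NullReferenceException when the selector comes back empty.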

What should you do with this information now? Let’s store it in a .csv file for later use. To do that, you first need to iterate over each node we extracted earlier and store its text into a list.

CsvHelper will do the rest of the job, creating and writing the extracted information into a file.

var titles = new List<Row>();

foreach (var item in HeaderNames)

{

titles.Add(new Row { Title = item.InnerText});

}

using (var writer = new StreamWriter("your_path_here/example.csv"))

using (var csv = new CsvWriter(writer, CultureInfo.InvariantCulture))

{

csv.WriteRecords(titles);

}
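If you want to check what CsvHelper produces without touching the disk, you can write to an in-memory StringWriter and read the result back with CsvReader. The following is only a sketch: the CsvRoundTrip class and its ToCsv and FromCsv helpers are hypothetical names, and the two sample titles are made-up data. The Row class is repeated so the example compiles on its own.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.IO;
using System.Linq;
using CsvHelper;

public class Row
{
    public string Title { get; set; }
}

public static class CsvRoundTrip
{
    // Hypothetical helper: serializes the rows to CSV text held in memory.
    public static string ToCsv(IEnumerable<Row> rows)
    {
        using (var writer = new StringWriter())
        using (var csv = new CsvWriter(writer, CultureInfo.InvariantCulture))
        {
            csv.WriteRecords(rows);
            // Push any buffered output into the StringWriter before reading it.
            csv.Flush();
            return writer.ToString();
        }
    }

    // Hypothetical helper: parses CSV text back into Row objects.
    public static List<Row> FromCsv(string text)
    {
        using (var reader = new StringReader(text))
        using (var csv = new CsvReader(reader, CultureInfo.InvariantCulture))
        {
            // GetRecords is lazy, so materialize it before the reader is disposed.
            return csv.GetRecords<Row>().ToList();
        }
    }

    public static void Main()
    {
        // Made-up sample titles; the second contains a comma on purpose.
        var titles = new List<Row>
        {
            new Row { Title = "History" },
            new Row { Title = "Geography, climate" }
        };

        string csvText = ToCsv(titles);
        Console.Write(csvText);

        foreach (var row in FromCsv(csvText))
        {
            Console.WriteLine(row.Title);
        }
    }
}
```

The first line of the generated text is a header row taken from the property name, Title, and values that contain commas are quoted so they survive the round trip.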